Prerequisites

Before reading, I recommend being familiar with the following:

- The concept of MLOps

- Basic knowledge of Kubeflow

KFP Overview

KFP Overview by Ozora Ogino (Author)

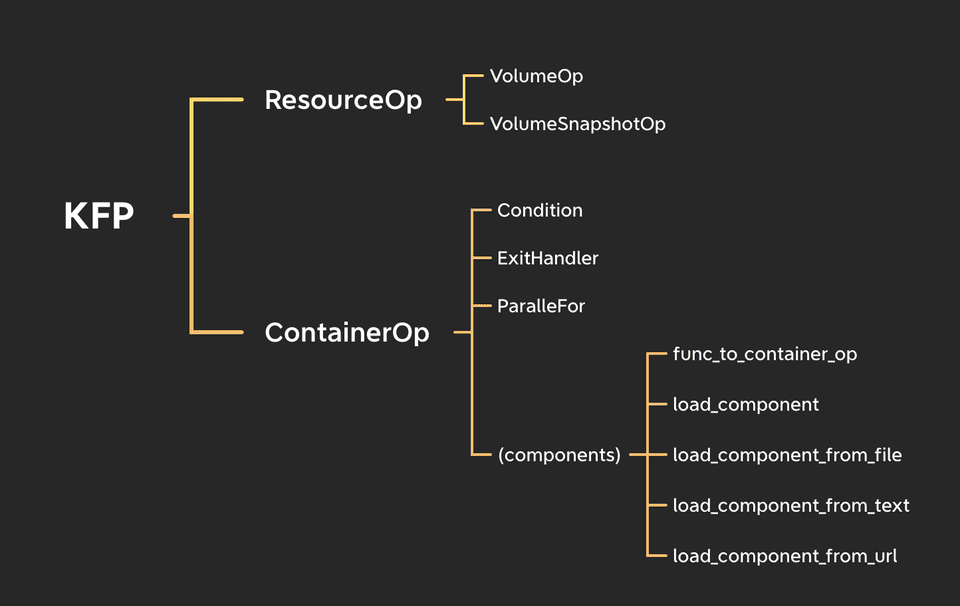

This figure gives a brief overview of Kubeflow Pipelines (KFP).

As you may know, KFP defines the dependencies of a pipeline as a DAG (Directed Acyclic Graph). Each node of the DAG is a step defined by a Python object, called an operator in the KFP context, and the edges are the dependencies between those steps.
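To make the DAG idea concrete, here is a minimal sketch in plain Python, independent of KFP. The step names are hypothetical, and the standard library's graphlib module (Python 3.9+) merely stands in for the ordering a pipeline engine has to compute; it is not how KFP is implemented.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline steps: each key depends on the steps in its set.
dependencies = {
    "preprocess": set(),
    "train": {"preprocess"},
    "evaluate": {"train"},
    "deploy": {"train", "evaluate"},
}

# static_order() yields each step only after all of its dependencies.
order = list(TopologicalSorter(dependencies).static_order())
print(order)
```

If the dependencies contained a cycle, TopologicalSorter would raise a CycleError, which is why the graph has to be acyclic.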

KFP has two main operators: ContainerOp and ResourceOp.

As the figure shows, KFP provides several ways to define your pipeline flexibly, and most of them ultimately boil down to either ContainerOp or ResourceOp.

In this article, I explain ContainerOp and ResourceOp.

In my experience, understanding these two first is very helpful.

1. ContainerOp

Most operations used in Kubeflow Pipelines are ContainerOps.

ContainerOp is a kind of abstraction of kubectl create pod .... It creates a Pod in a specific namespace (kubeflow by default) with the given parameters.

The point is that, as the figure shows, KFP offers many constructs such as Conditions, ExitHandler, ParallelFor, and component functions, and all of them wrap ContainerOp. This means you can use the same methods on them as on ContainerOp.

Let’s look at an example.

    from kfp import dsl


    @dsl.pipeline(name='sample for ContainerOp', description='')
    def pipeline():
        dsl.ContainerOp(
            name='sample ContainerOp',
            image='busybox',
            # Kubernetes `command` (overrides the image's ENTRYPOINT).
            # NOT Docker's CMD!!
            command=['echo', 'Hello KFP!'],
        )

The parameters are simple and self-explanatory. If you are familiar with Docker or Kubernetes, this should be straightforward.

Of course, ContainerOp has more parameters, e.g. init_containers or sidecars.

Additionally, ContainerOp provides many methods that let you manage it flexibly.

For example, you can add a label to the ContainerOp’s pod like this:

    dsl.ContainerOp(
        name='redis-master',
        image='redis',
    ).add_pod_label(name='app', value='redis')

I often use set_caching_options, set_gpu_limit, and add_toleration.

For more details, please read the official documentation.

2. ResourceOp

As I said in the previous section, ContainerOp is an abstraction of kubectl create pod .... ResourceOp, in turn, is an abstraction of kubectl itself. This means you can create, delete, apply, or patch any Kubernetes resource, including custom resources.

Let’s see an example.

    from kfp import dsl

    # k8s manifest as dict
    pod_resource = {
        "apiVersion": "v1",
        "kind": "Pod",
        # Kubernetes rejects a Pod without a name or generateName;
        # the prefix used here is only illustrative.
        "metadata": {"generateName": "busybox-"},
        "spec": {
            "containers": [
                {
                    "image": "busybox",
                    "name": "busybox",
                    "command": ["echo", "Hello KFP!"],
                }
            ]
        },
    }


    @dsl.pipeline(name='sample for ResourceOp', description='')
    def pipeline():
        dsl.ResourceOp(
            name='sample ResourceOp',
            k8s_resource=pod_resource,
            action='create',
            success_condition='status.phase == Running',
            failure_condition='status.phase == Failed',
        )

First, you define the Pod manifest as a Python dictionary for ResourceOp, then choose an action from create, delete, apply, or patch.

Additionally, I strongly recommend setting both success_condition and failure_condition. If you set neither, your ResourceOp will always succeed, even if the resource ends up in a failed state. To see which fields are available for the conditions, kubectl get pod mypod -o yaml can be helpful. I often use kubectl get -o json pods mypod | jq '...' to check them.
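The left-hand side of a condition such as status.phase == Running is a dotted path into the resource's manifest. Here is a rough sketch, in plain Python, of how such a path maps onto the JSON that kubectl prints. Both sample_output (a heavily trimmed, made-up stand-in for real kubectl output) and the get_field helper are hypothetical and not part of KFP or kubectl.

```python
import json

# Illustrative, heavily trimmed stand-in for `kubectl get pod mypod -o json`;
# real output contains many more fields.
sample_output = """
{
  "kind": "Pod",
  "metadata": {"name": "mypod"},
  "status": {"phase": "Running"}
}
"""


def get_field(manifest: dict, dotted_path: str):
    """Follow a dotted path such as 'status.phase' through nested dicts."""
    value = manifest
    for key in dotted_path.split("."):
        value = value[key]
    return value


pod = json.loads(sample_output)
phase = get_field(pod, "status.phase")
print(phase)  # Running
```

Exploring the manifest this way (or with jq) tells you which paths exist before you write them into success_condition or failure_condition.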

    """
    Tips:
    Kubeflow official examples use Python dictionaries
    for the manifests.
    But I recommend defining manifests as YAML.
    """
    import yaml
    from pathlib import Path

    pod_resource = yaml.safe_load(Path("pod.yaml").read_text())

I recommend minimizing the use of ResourceOp because its behavior is a little quirky. For example, the logs of a pod created by ResourceOp never appear in the Kubeflow UI. If you want to create pods, you should use component functions (e.g. func_to_container_op) or ContainerOp. Please consider them first and make the best decision. I’ll write another article about this topic. Stay tuned :)